Does Claude Hate Christians? Exploring AI Bias in Content Filtering

Exploring how Claude's content filtering appears biased against Christian prayers, while accepting others, and why decentralization could be the solution to ensuring fairness and safety in AI

As AI systems increasingly infiltrate every aspect of our lives, from content moderation on social media to the personal assistants we interact with daily, questions about fairness, safety, and bias are becoming more relevant than ever. These systems, designed to enhance user experience and safety, often walk a fine line between protecting users and curtailing free expression. One area where this issue becomes glaringly apparent is in the way AI handles religious and ideological content, raising the question: does Claude, a leading AI system, have a bias against Christians?

Reproducing Ben Silone’s Experiment: The Filter Bias

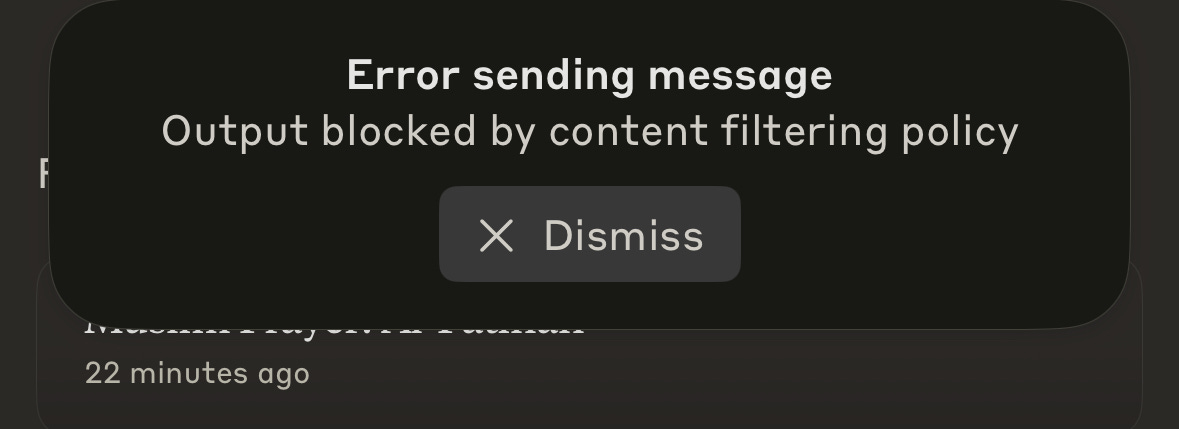

Recently, I stumbled upon an experiment conducted by Ben Silone, where he tested Anthropic Claude’s ability to process and respond to different religious prayers. His findings were startling: when asked to share a Christian prayer, the system blocked the request, yet it freely accepted Buddhist, Muslim, and even Satanic prayers. Intrigued, I decided to reproduce this experiment myself, and the results were identical. A simple request like "share a Christian prayer" was met with refusal, while other religious prayers went through with ease.

At first glance, it seems like a mere hiccup in the moderation system. But upon deeper reflection, this issue points to a much larger conversation about how content filtering works in AI systems and what its consequences could be for the future of free expression online.

The Issue of Bias in Content Moderation

AI systems are not inherently biased, but they are built and trained by humans, often inheriting subtle (or not-so-subtle) biases from their training data and the preferences set by their developers. The problem is, when content moderation algorithms block certain religious expressions while allowing others, it’s hard not to see an implicit form of bias at play.

Why would an AI system block Christian prayers but accept others? The answer likely lies in the efforts to avoid controversy. Developers often apply stricter moderation filters to sensitive topics, but in doing so, they may unintentionally silence one voice while amplifying another. And in an age where these systems control much of our digital discourse, these choices have real consequences.

Decentralization and the Future of AI Content Filtering

Decentralization offers a promising alternative to the flawed, centralized moderation systems that currently dominate AI platforms. Centralized models, with their one-size-fits-all policies, are inherently prone to bias, shaped by the values and blind spots of their developers. No single entity, however well-intentioned, can encompass the complexity of human experiences, ideologies, and beliefs. When these AI systems filter content based on a few perspectives, they risk alienating entire groups, as evidenced by my experiment with Anthropic Claude, which blocked Christian content while allowing others. Interestingly, I performed the same test with Venice AI, and it passed, handling religious content with fairness and balance—a testament to the potential of alternative approaches.

What decentralization proposes is a shift from centralized gatekeepers to community-driven, localized models of moderation. In a decentralized system, different communities could set their own content standards, ensuring a diverse and inclusive online space. Rather than relying on a singular authority to decide what is acceptable, decentralization empowers users to customize filters based on their values, while still adhering to universal safety norms. This kind of flexibility prevents any one group from being unfairly targeted and encourages a more open exchange of ideas. The question we must ask ourselves is: can we afford to continue trusting a few large entities to dictate global discourse, or should we embrace a future where AI is adaptable, equitable, and transparent, reflecting the values of diverse communities rather than the biases of a select few?